
Novel optical measurement of VR headset tracking performance

OptoFidelity worked in cooperation with the University of Jyväskylä, Department of Physics, on academic research into testing technology for head-mounted displays (HMDs). Our aim was to find new optical measurement technologies and methods for VR headset tracking performance, and as a result we developed a novel technology for testing HMDs. Poor tracking performance in an HMD drastically degrades the end-user experience. OptoFidelity has been working on user interface testing for years, and as HMDs become more and more popular, we believe better testing technology is needed as well.

One of the most popular topics in today’s smart device industry and research is the development of virtual and augmented reality (VR/AR) headsets. State-of-the-art, room-scale implementations utilize multiple cameras and sensors to find the position and orientation of the user’s head in the surrounding space. This is called six degrees-of-freedom (6DoF) tracking. Simultaneous Localization and Mapping (SLAM) algorithms, familiar from robotics, are also utilized to make the headset better adapt to its surroundings by recognizing walls and other obstacles. Qualcomm, for example, has implemented SLAM in its new mobile processor [1].

The quality and accuracy of the head tracking are key contributors to the virtual reality experience. Poor tracking may cause nausea or simply undermine the credibility and immersiveness of the experience. For the development of both devices and content, an objective way of assessing tracking behavior is necessary. Tracking quality may be quantified by observing, for example, the latency between the user’s motion and the corresponding update of the display content (motion-to-photon latency), jitter (random shaking of the content), or drift.

There are several possible ways of testing the tracking performance. Given access to suitable APIs of a headset, one may record and investigate data from the headset’s tracking system, graphics stack or other components. Another example is application-to-photon latency measurement: the graphical content is changed, the resulting change on the display is observed by an external sensor (such as a color sensor or a camera), and the latency between the two events is measured.

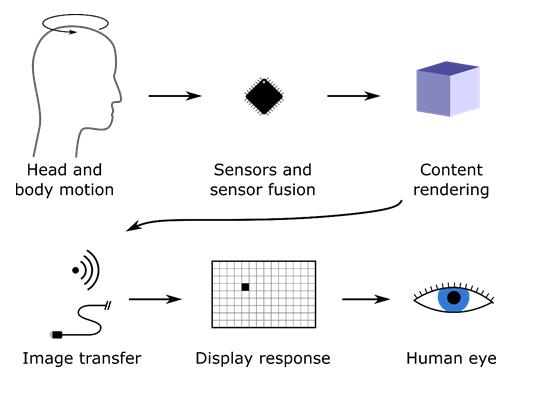

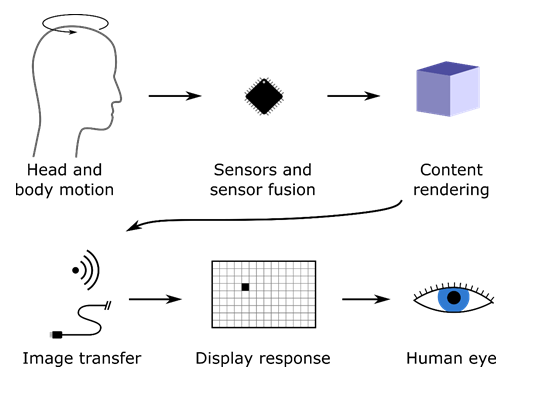

Figure 1: Illustration of the “motion-to-photon pipeline,” i.e., parts of the VR/AR system involved in translating the user’s motions to content and display updates, creating the virtual reality experience.

Ultimately, however, the translation of motion to display updates is what matters to the user. The measurement methods discussed above do not capture this kind of end-to-end behavior. OptoFidelity has developed a novel VR/AR tester for end-to-end measurement. It consists of a robot that actuates the headset, worn on a head-shaped jig, and a camera that captures the display content frame by frame and performs computer vision analysis. The content motion and robot motion profiles are compared to find the motion-to-photon latency. This measurement method captures the total, accumulated latency of the VR/AR system, as illustrated in Figure 1. Figure 2 shows our first product for motion-to-photon latency measurement, the OptoFidelity VR Multimeter, which uses the HMD testing technology described here.
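As a rough illustration of how two motion profiles can be compared, the Python sketch below estimates latency as the time shift at which the content motion correlates best with the robot motion. It is not OptoFidelity’s implementation: the function names, the sinusoidal robot motion, the 1 kHz sampling rate and the 20 ms delay are all assumptions made up for this example.

```python
import math

def pearson(a, b):
    """Pearson correlation of two equal-length sequences (scale-invariant)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def estimate_latency(robot, content, sample_period_s, max_lag):
    """Return the delay (seconds) at which `content` best follows `robot`.

    Both profiles are assumed to be sampled on the same clock and rate.
    """
    n = len(robot)
    best_lag, best_score = 0, float("-inf")
    for lag in range(max_lag + 1):
        # Pair robot[i] with content[i + lag]: the content trails the robot.
        score = pearson(robot[: n - lag], content[lag:])
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag * sample_period_s

# Synthetic data (assumed): 2 Hz sinusoidal robot motion sampled at 1 kHz,
# with the displayed content delayed by 20 samples (20 ms).
sample_period_s = 0.001
true_lag = 20
robot = [math.sin(2 * math.pi * 2.0 * i * sample_period_s) for i in range(2000)]
content = [0.0] * true_lag + robot[:-true_lag]

latency_s = estimate_latency(robot, content, sample_period_s, max_lag=100)
print(f"estimated latency: {latency_s * 1000:.1f} ms")
```

Correlation is used here because it ignores scale, so a robot profile in degrees can be compared with a content profile in pixels. The search range must stay shorter than the period of the motion, otherwise a periodic profile matches itself at more than one shift.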

Figure 2: The OptoFidelity VR Multimeter measures motion-to-photon latency in one degree of freedom. It is part of the OptoFidelity HMD testing technology portfolio.

Capturing images of a VR display is not a simple task because of the way the displays work: practically all VR displays have low pixel persistence, meaning that the display is lit for only a short period (typically a few milliseconds) in each frame. The image capture must therefore be accurately synchronized to the display refresh. The approach taken by OptoFidelity is a custom smart camera consisting of an image sensor, a microcontroller and a color sensor. The color sensor is pointed at the display and sampled at high speed by the microcontroller, which detects the rising edges of the display illumination. The microcontroller then triggers the image exposure and reads out the image. Part of the development of this camera is described in a master’s thesis [2] by our employee Sakari Kapanen.
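The edge detection step can be sketched in a few lines of Python. This is only an illustration on a synthetic trace, not the camera’s firmware: the two-threshold (hysteresis) scheme, the function name `find_edges`, the 10 kHz sampling rate and the threshold values are all assumptions chosen for the example.

```python
def find_edges(samples, high, low):
    """Return (rising, falling) sample indices using two-threshold hysteresis.

    A rising edge is reported when a dark display crosses `high`; a falling
    edge when a lit display drops to `low` or below. The gap between the
    thresholds keeps sensor noise from producing repeated triggers.
    """
    rising, falling = [], []
    lit = samples[0] >= high
    for i, value in enumerate(samples):
        if not lit and value >= high:
            lit = True
            rising.append(i)  # this is where an exposure would be triggered
        elif lit and value <= low:
            lit = False
            falling.append(i)
    return rising, falling

# Synthetic trace (assumed): 10 kHz sampling, three frames of 111 samples,
# each lit for 20 samples, i.e. an 11.1 ms frame with 2 ms persistence.
sample_rate_hz = 10_000
frame = [0.05] * 40 + [1.0] * 20 + [0.05] * 51
trace = frame * 3

rising, falling = find_edges(trace, high=0.6, low=0.3)

# The trace starts dark, so rising[k] and falling[k] belong to the same frame.
frame_intervals_ms = [1000 * (b - a) / sample_rate_hz
                      for a, b in zip(rising, rising[1:])]
persistence_ms = [1000 * (f - r) / sample_rate_hz
                  for r, f in zip(rising, falling)]
print(rising, frame_intervals_ms, persistence_ms)
```

The same edge list yields both the trigger instants and per-frame timing: the spacing of rising edges is the frame interval, and the rising-to-falling distance is the time the display stays lit.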

The machine vision algorithms used in the VR measurements are also implemented on the microcontroller. For the 1DoF motion-to-photon latency measurements, the motion of the content is detected by an optical flow algorithm. For more complex measurements, a set of detectable objects is placed in the virtual world and shown on the headset. The camera detects the locations of the virtual objects in the captured 2D images. From these 3D-2D point pairs, the pose of the virtual camera, and thus the pose estimated by the headset’s tracking system, can be calculated. The thesis [2] analyzes this algorithm mathematically. A particularly important observation was that planar arrangements of target points do not work well for 6DoF pose estimation, because some motions, for example X rotation and Y translation (and vice versa), are ambiguous in the 2D image. The research guided us to add off-planar points to the target pattern.
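The planar ambiguity can be reproduced with a small numerical experiment. The Python sketch below is not the analysis from the thesis: it assumes an ideal pinhole camera with a made-up focal length, a 3x3 target grid one metre away, and hypothetical helper names. It rotates the target slightly about the X axis, then fits only a Y translation to the rotated image and reports the pixel error that remains. A full pose solver would fit all six parameters, so the numbers are indicative only.

```python
import math

FOCAL_PX = 1000.0  # assumed pinhole focal length, in pixels

def project(point):
    """Project a 3D point in camera coordinates to 2D pixel coordinates."""
    x, y, z = point
    return (FOCAL_PX * x / z, FOCAL_PX * y / z)

def rotate_x(point, angle):
    """Rotate a point about the camera X axis by `angle` radians."""
    x, y, z = point
    c, s = math.cos(angle), math.sin(angle)
    return (x, c * y - s * z, s * y + c * z)

def residual_after_y_translation(points, angle):
    """RMS pixel error left when a Y translation is fitted to an X rotation."""
    rotated = [project(rotate_x(p, angle)) for p in points]
    original = [project(p) for p in points]
    # A Y translation t moves each image point by (FOCAL_PX / z) * t, so the
    # least-squares t has a closed form.
    gains = [FOCAL_PX / p[2] for p in points]
    shifts = [r[1] - o[1] for r, o in zip(rotated, original)]
    t = sum(d * g for d, g in zip(shifts, gains)) / sum(g * g for g in gains)
    squared_error = 0.0
    for p, r in zip(points, rotated):
        u, v = project((p[0], p[1] + t, p[2]))
        squared_error += (u - r[0]) ** 2 + (v - r[1]) ** 2
    return math.sqrt(squared_error / len(points))

grid = [(x, y) for x in (-0.1, 0.0, 0.1) for y in (-0.1, 0.0, 0.1)]
planar = [(x, y, 1.0) for x, y in grid]
# Same grid, but neighbouring points alternate between 0.8 m and 1.2 m depth.
staggered = [(x, y, 0.8 if i % 2 == 0 else 1.2) for i, (x, y) in enumerate(grid)]

planar_rms = residual_after_y_translation(planar, 0.01)
staggered_rms = residual_after_y_translation(staggered, 0.01)
print(f"planar: {planar_rms:.3f} px, staggered: {staggered_rms:.3f} px")
```

With the planar target the leftover error is well under a pixel, so a translation explains the rotation almost perfectly and the two cannot be told apart in the presence of detection noise. With staggered depths the error exceeds a pixel, because translation shifts near and far points by different amounts while a small rotation does not.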

Overall, measuring the motion from the device’s display with a custom machine vision camera has proven convenient. Comparing, for example, the motion-to-photon latency of a set of different VR headsets is very easy because no intrusive access to the device is required, and swapping the headset is essentially all the operator must do. The integrated color sensor also enables monitoring of the frame intervals and persistence, frame by frame. More sophisticated 3DoF and 6DoF measurement platforms are under development, and they apply similar measurement methods, including the camera.

The full academic study can be found in the publications of the University of Jyväskylä, Department of Physics. If you are interested in our other posts and want to learn more about HMD testing technology, please follow our blog with the tag VR or visit our VR testing solution pages.

References

[1] https://www.qualcomm.com/news/onq/2018/01/18/snapdragon-845-immersing-you-brave-new-world-xr

[2] Sakari Kapanen, master’s thesis: https://jyx.jyu.fi/handle/123456789/57875
