Unveiling Display Defects: Enhancing Quality Assurance with Automatic Testing

When considering the user experience of smartphones, display characteristics such as resolution, brightness, sharpness, contrast, uniformity, and color accuracy are important. All of these properties except resolution may have deteriorated on a pre-owned smartphone. Display quality degrades over time through aging, and the way it degrades depends on the display technology, OLED or LCD. For example, OLED displays suffer from burn-in: statically displayed brightness patterns get burned into the display so that they can be seen on a blank screen or, in the most severe cases, even on top of other content. Burn-in can also progress unevenly across color channels, leading to color distortion across the whole display.

LCD displays, on the other hand, may suffer from aging of the backlight components or the liquid crystal material, leading to nonuniform brightness or color. Physical wear and tear also degrades display quality.

This article provides real-life examples of different defects found in pre-owned smartphone displays and explains how automated testing can assess and catch these defects objectively. The display images shown below have all been produced by robots designed for testing smartphone functionality.

Overall Availability

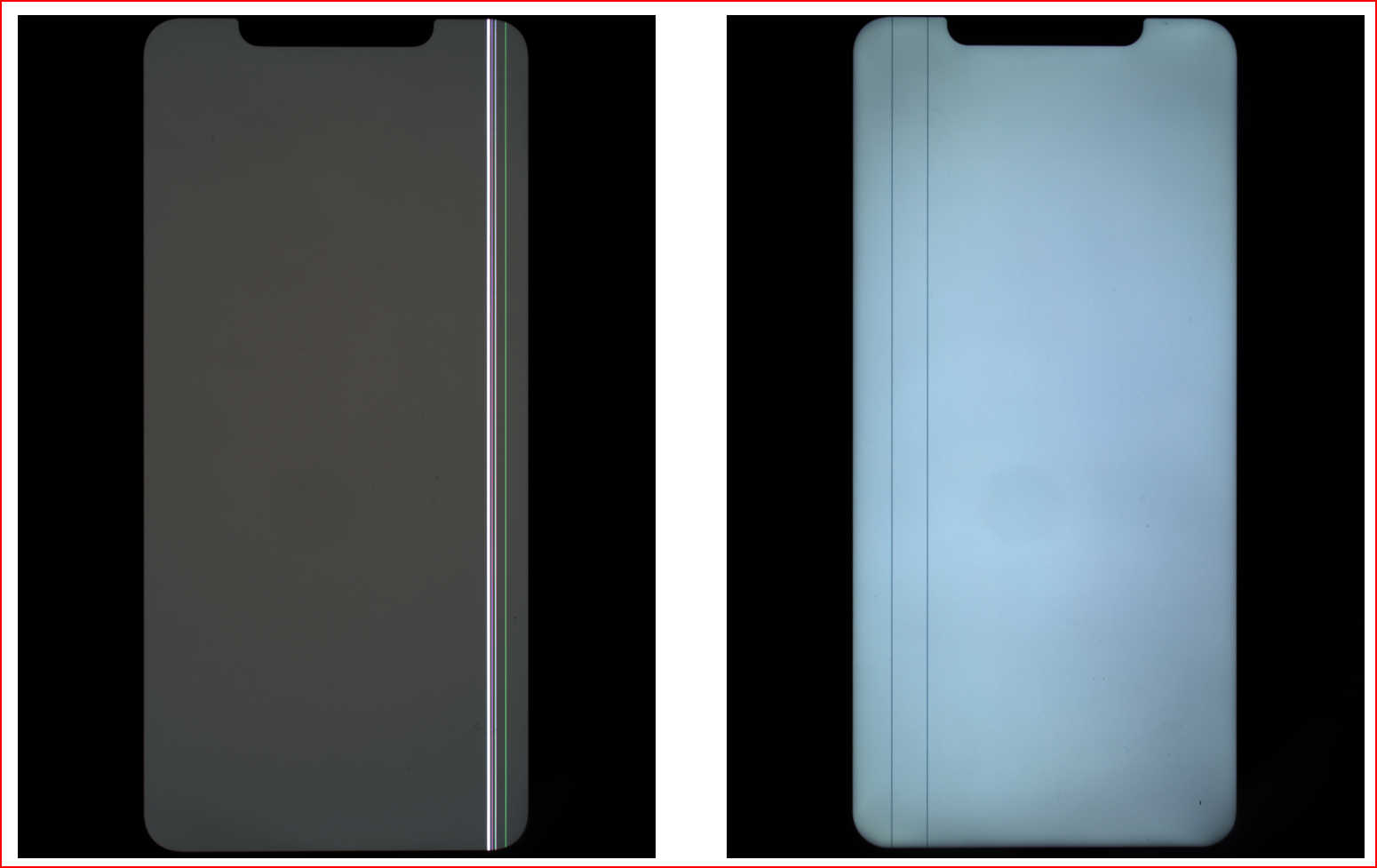

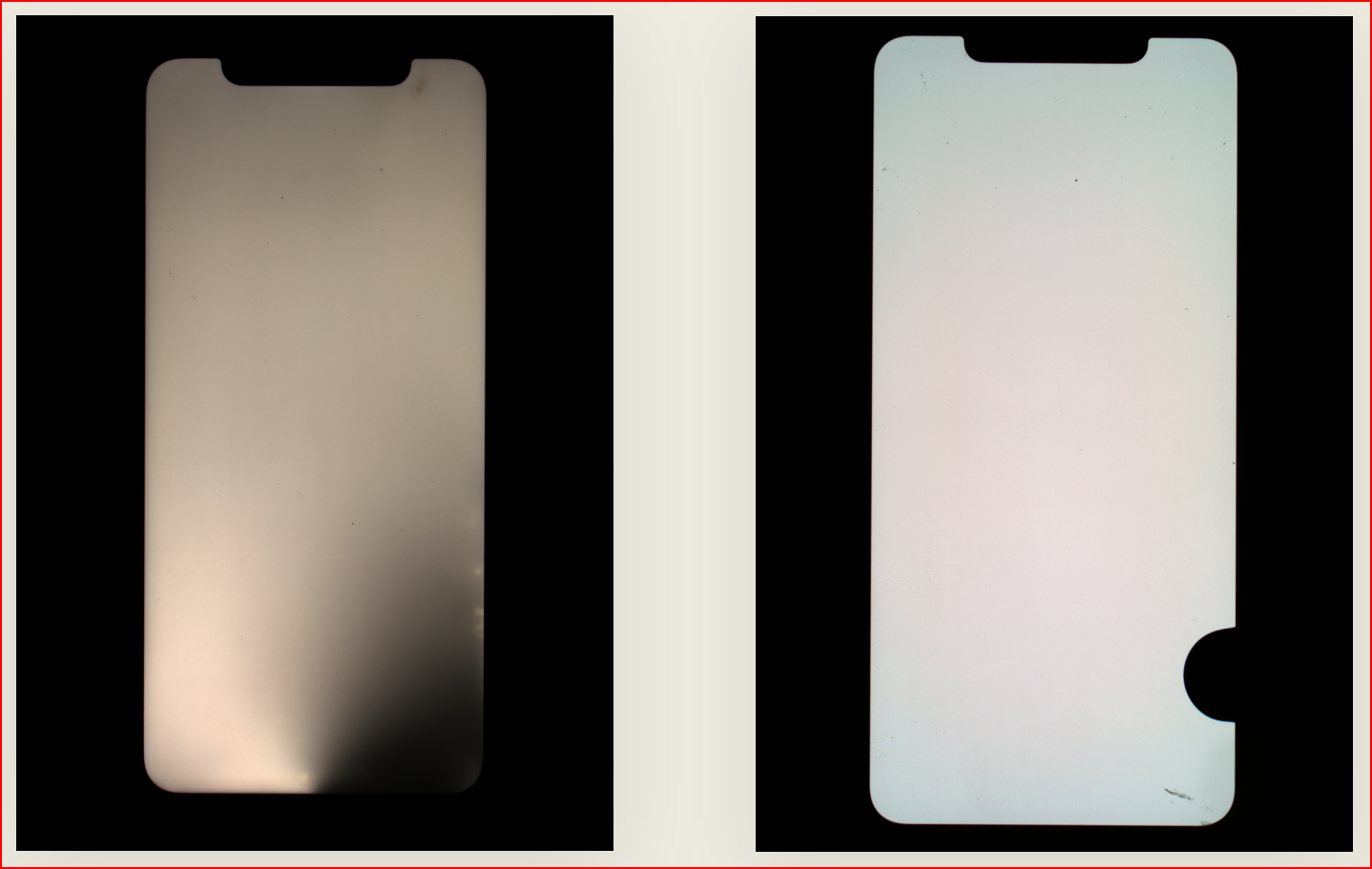

The first set of examples consists of random collections of images showing the displays of used smartphones with different levels of wear and tear. The first image shows LCD displays and the second shows OLED displays. Within each collection, all devices are the same make and model (the LCD and OLED collections are, of course, different models), and every device is showing an all-white screen. Even though the details of individual displays cannot be seen in these collections, it is apparent how wide the range of tint in the display color can be.

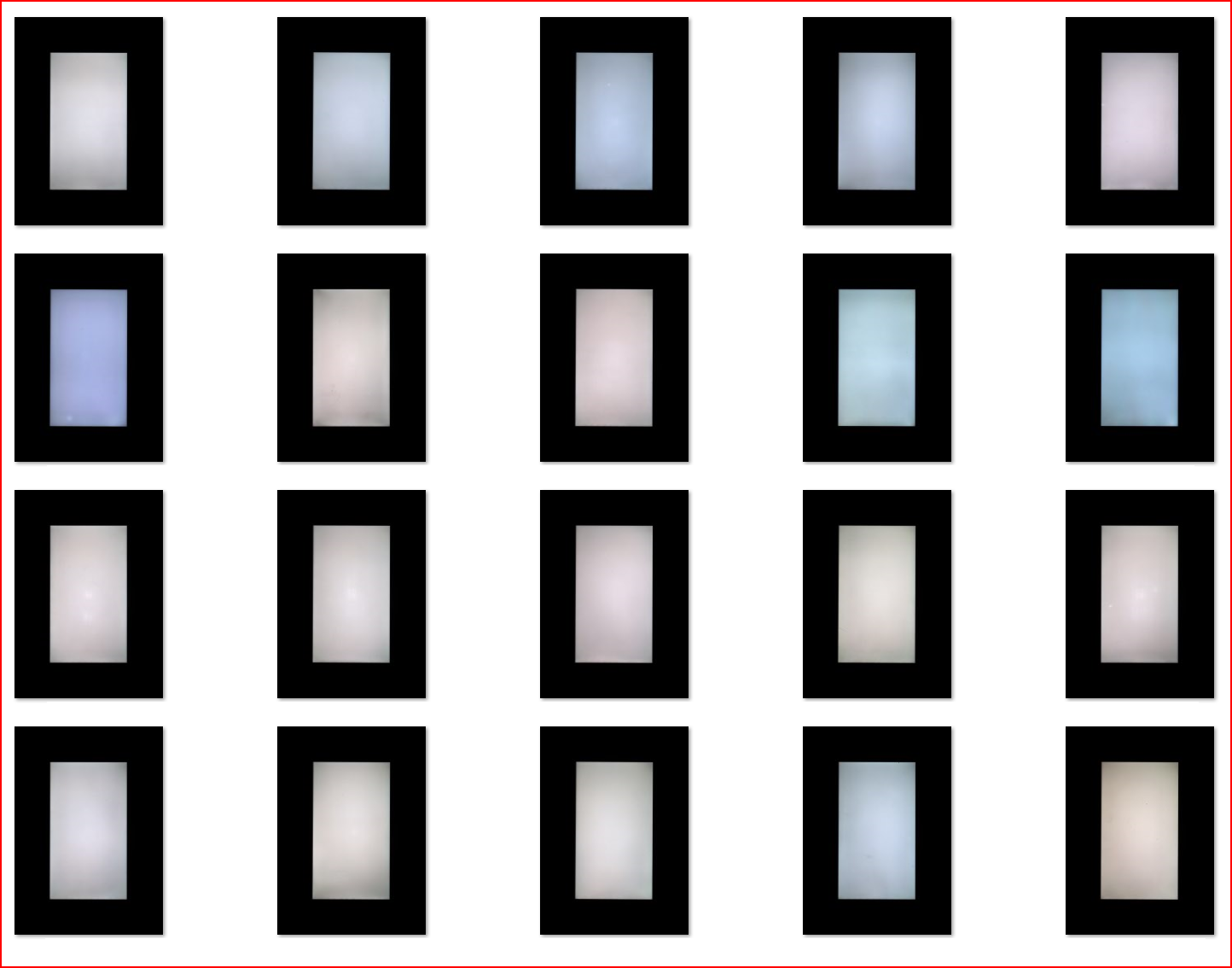

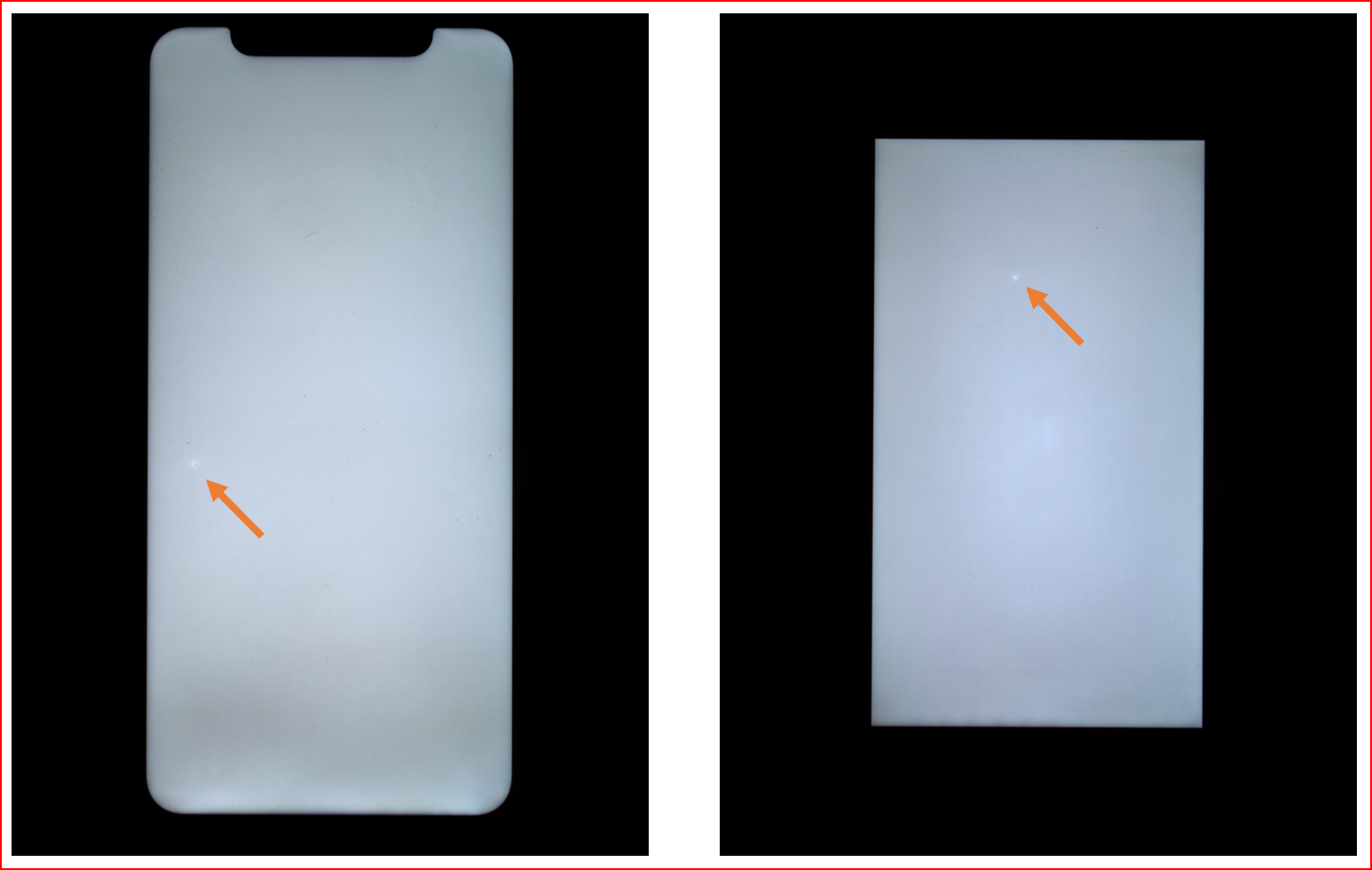

Bright spots

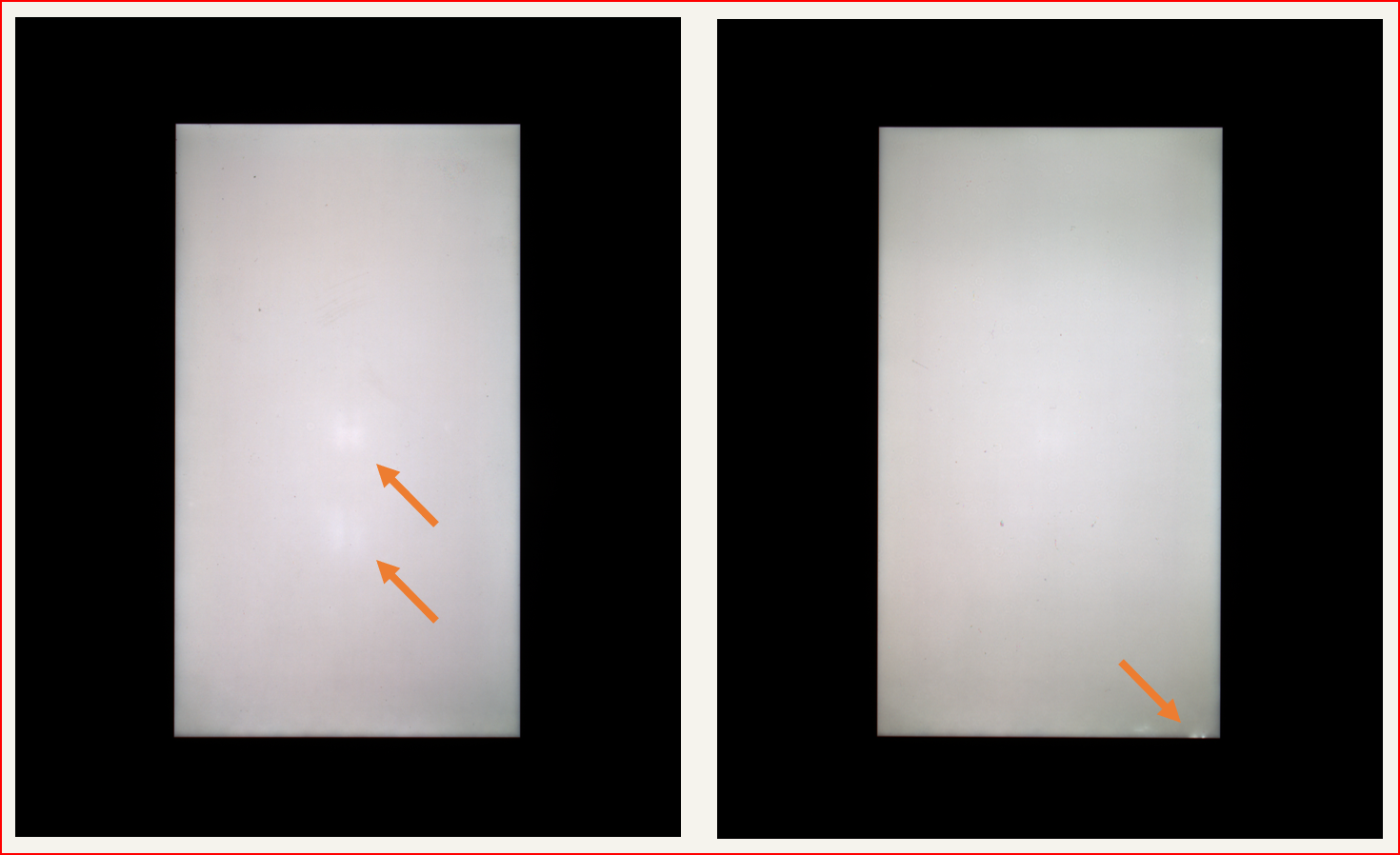

These images show typical bright spots in an LCD display.

Row or column defects

These two examples show typical column defects.

Color distortion

These examples show color distortion in an OLED display: pink in the center and green at the top and bottom.

Blemish and light leakage

The left image displays bright blemishes in the middle, and the right image shows backlight leakage in the bottom right corner.

Backlight defects and black areas

Here are examples of LCD and OLED defects. The image on the left shows an LCD defect: some of the backlight LEDs have failed. The one on the right shows an OLED defect: part of the display is totally inactive.

Keyboard burn-in

Below are two examples of burned-in keyboards. These examples are discussed in more detail in the Automated testing section.

Manual testing

In manual testing, a person looks at a blank display (showing white, black, red, green, and blue screens) at different brightness levels and tries to spot any defects. A white balance or color reference material can help in assessing absolute brightness or hue. The success of manual testing depends heavily on the experience of the testing personnel and on how much time is available to inspect each device. Subjectivity is the main challenge to keeping the quality of testing consistent. The more technical instruments are involved in the testing process, the less the testing can be called manual.

Automated testing

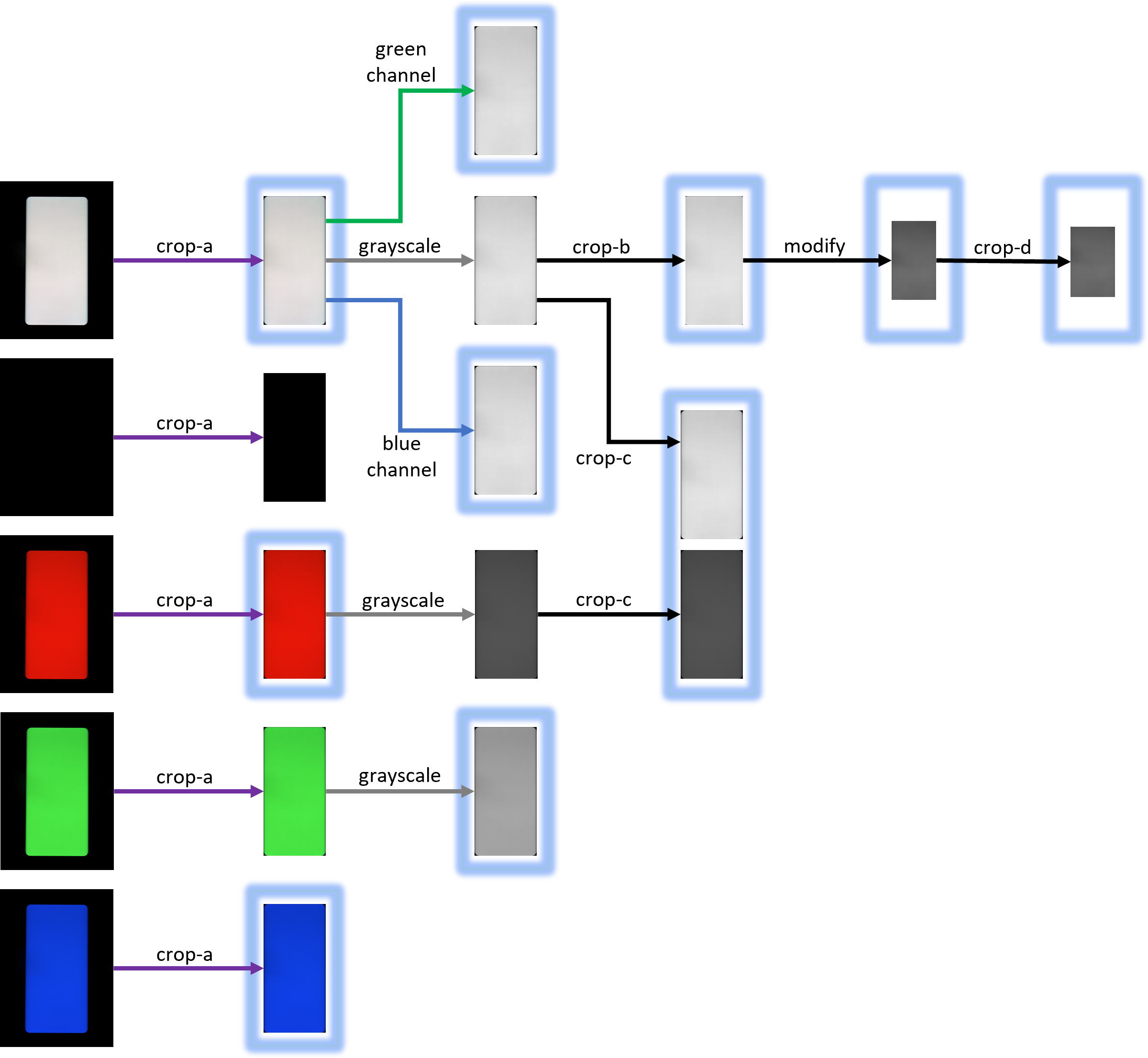

The image collections at the beginning of this article show what the displays look like when the smartphone is presenting an all-white screen. In automated testing, however, the individual color channels and possibly also the black screen are imaged, and their contribution to the defect analysis is taken into account as appropriate. The behavior of the automated testing can be adjusted with system configuration settings. The example flow chart illustrates how different analyses can use different color channels.

As shown above, displays can be defective in many ways. For that reason, automated testing can consist of a number of different analyses (roughly 10-20), each specifically tailored to catch certain types of defects. The collection of analyses and their sensitivity can be tailored to the business logic and focus area of the enterprise that needs automated testing of second-hand smartphones.
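The idea of per-analysis color channels and adjustable sensitivity could be expressed in a configuration along the following lines. This is only a minimal sketch: the analysis names, the screen assignments, the threshold values, and the `AnalysisConfig` and `screens_to_capture` names are all hypothetical and are not taken from any actual test system.

```python
from dataclasses import dataclass

# Test screens that can be imaged (white, black, red, green, blue as in the article).
SCREENS = ("white", "black", "red", "green", "blue")


@dataclass(frozen=True)
class AnalysisConfig:
    name: str            # hypothetical analysis name
    screens: tuple       # captured screens this analysis reads
    threshold: float     # hypothetical sensitivity setting
    enabled: bool = True


def screens_to_capture(configs):
    """Return the screens needed by the enabled analyses, in SCREENS order."""
    needed = set()
    for cfg in configs:
        if not cfg.enabled:
            continue
        unknown = set(cfg.screens) - set(SCREENS)
        if unknown:
            raise ValueError(f"{cfg.name}: unknown screens {sorted(unknown)}")
        needed.update(cfg.screens)
    return [s for s in SCREENS if s in needed]


# Illustrative values only; a real deployment would tune these per customer.
configs = [
    AnalysisConfig("keyboard_burn_in", ("white",), threshold=0.54),
    AnalysisConfig("color_distortion", ("red", "green", "blue"), threshold=0.10),
    AnalysisConfig("backlight_leakage", ("black",), threshold=0.20, enabled=False),
]

print(screens_to_capture(configs))
```

Disabling an analysis removes its screens from the capture list, so in this sketch the black screen is not imaged unless some enabled analysis asks for it.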

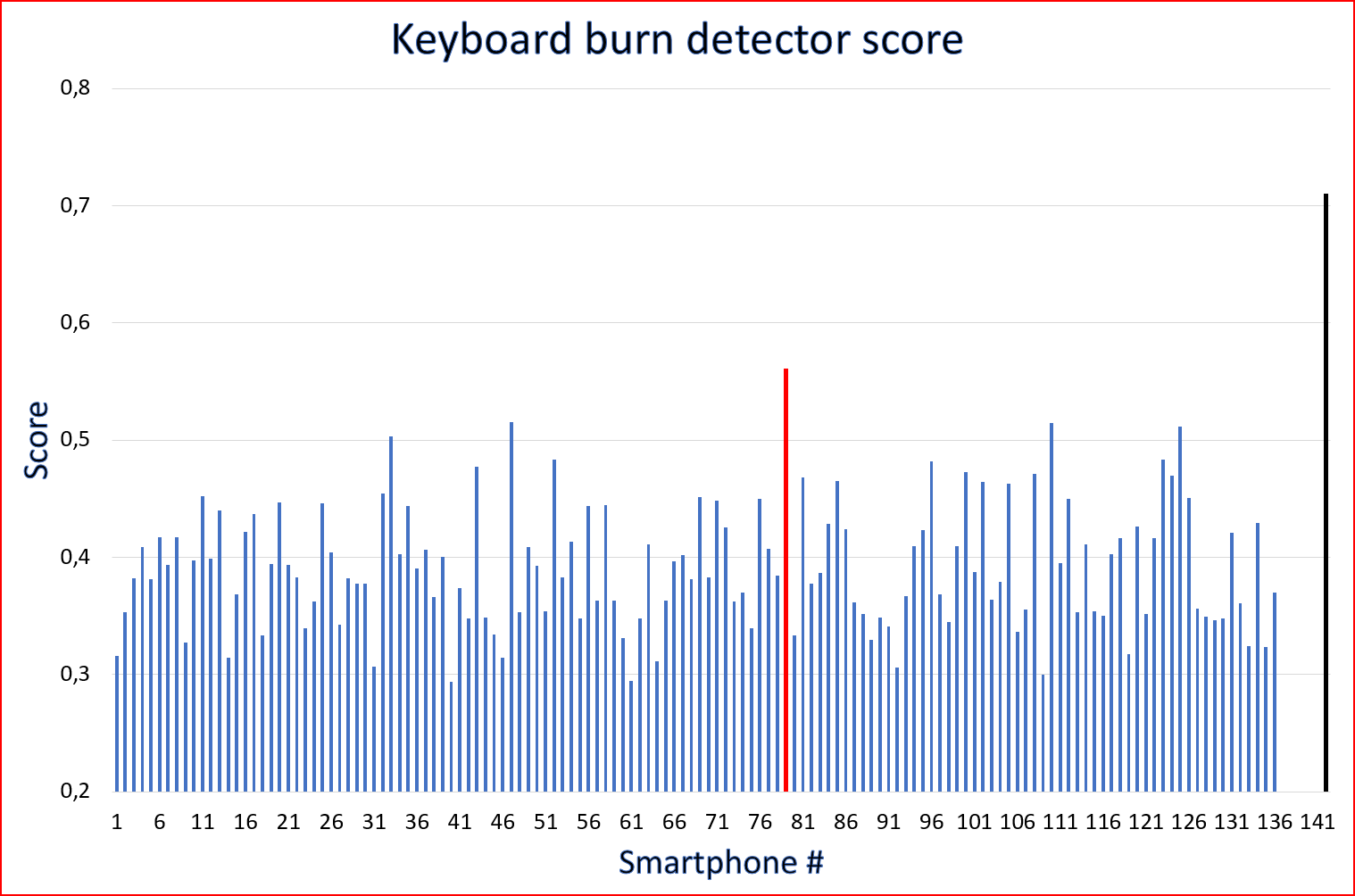

Let’s take the detection of keyboard burn-in as an example. Two smartphones with a burned-in keyboard were shown above. One is so severely burned that catching the defect should be quite straightforward, both manually and automatically. The other, however, might slip through manual inspection. Automated analysis produces a number, a score, that estimates the severity of the keyboard burn-in. The diagram shows the scores for 136 smartphones of the same make and model. For this model, the score typically falls in the range 0.30 - 0.52 for devices that do not have visible burn-in, and in this example 135 of the devices had no visible keyboard burn-in. The one device with a score of 0.56 (the red bar) is the faintly burned-in device shown in the example above. So, with the adjustable detection threshold set somewhere between 0.52 and 0.56, this very faint defect could be detected without any false detections. For comparison, the severely burned-in device (the black bar) gives a score of 0.71, although that number is not directly comparable to the other scores because the device is a different model.
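The threshold reasoning above can be illustrated with a small sketch. It assumes the simplest possible rule, placing the threshold at the midpoint of the gap between the highest clean score and the lowest defective score; the article only states that the threshold lies somewhere between 0.52 and 0.56, so the midpoint rule and the function names are assumptions. The clean scores listed are a few illustrative values spanning the reported range, not the actual 135 measurements.

```python
def pick_threshold(clean_scores, defect_scores):
    """Return the midpoint of the gap between clean and defective scores.

    Returns None when the two groups overlap, i.e. no threshold can
    separate them without a false detection or a missed defect.
    """
    highest_clean = max(clean_scores)
    lowest_defect = min(defect_scores)
    if lowest_defect <= highest_clean:
        return None
    return (highest_clean + lowest_defect) / 2


def classify(score, threshold):
    """Flag a device as burned-in when its score exceeds the threshold."""
    return "burn-in" if score > threshold else "ok"


# Illustrative values only: clean devices span 0.30 - 0.52, the faint defect scores 0.56.
clean_scores = [0.30, 0.41, 0.47, 0.52]
defect_scores = [0.56]

threshold = pick_threshold(clean_scores, defect_scores)
print(round(threshold, 2))
print(classify(0.56, threshold), classify(0.52, threshold))
```

Because scores are not directly comparable between models, a threshold chosen this way would apply only to the model it was derived from; the 0.71 score of the other device would need its own reference population.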

To learn more about FUSION, our automated test system for functional testing of pre-owned smartphones, visit our product page: OptoFidelity FUSION

Feel free to contact us directly to get more information about our solutions: Contact us.