Measuring Head-Mounted Display’s (HMD) Motion-To-Photon (MTP) Latency

The end-consumer experience is paramount to the successful adoption of new technologies and products. Head-mounted displays (HMDs) from makers such as Sony, Pico, DPVR, HTC, Facebook's Oculus, and others promise an immersive experience in augmented and virtual reality. Many factors are critical to delivering that experience, including motion-to-photon (MTP) latency. High motion-to-photon latency leads to spatial disorientation, motion sickness, and dizziness. In this post, we explore why motion-to-photon latency matters, how to measure it, and how to optimize HMD performance.

The end consumer expects a comfortable and enjoyable experience from an HMD. An HMD's functionality and performance depend on the product's optical design and on the features and configuration of its sensors and controllers. The overall experience relies on specifications that can be grouped into comfort and immersion categories. In his book Optical Architectures for Augmented-, Virtual-, and Mixed-Reality Headsets, Bernard Kress lists common comfort-related specifications, including weight, angular resolution, contrast, and IPD coverage, among others. Some of the immersion-related specifications include FOV, motion-to-photon latency, world locking, HDR, and active dimming.

Motion-to-Photon (MTP) Latency

Virtual, mixed, and augmented reality are mostly about immersion: creating a sense of physical presence in a non-physical world. Latency is the delay between action and reaction. In AR/VR/MR, motion-to-photon latency is defined as the time between the user's head movement (action) and the corresponding change in the HMD's display output (reaction). For the best immersion, HMD users should not experience a delay between their physical movement and the display output. Otherwise, the sense of physical presence in the virtual world is lost. MTP latencies of more than 20 ms are noticeable to the user and cause spatial disorientation and dizziness, referred to as VR sickness or motion sickness. A low MTP latency also improves video see-through comfort and hologram stability. According to Heinrich Fink, a good immersive experience requires an MTP latency of less than 5 ms for AR and less than 10 ms for VR applications.

For these reasons, reducing MTP latency is critical to providing the best immersive experience to HMD users. Since it is a device parameter, HMD manufacturers, content creators, and application developers are all interested in measuring MTP latency. MTP latency is affected by different components of the HMD, such as the sensors, SoC, display, and software.

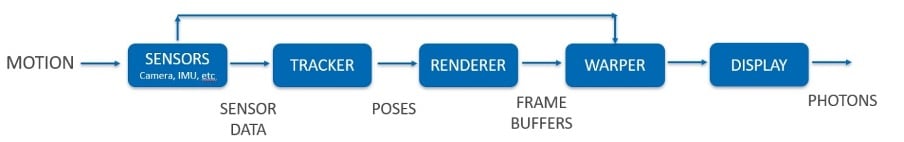

Figure 1. Processing chain of Motion-To-Photon (MTP)

The MTP measurement is one of the most important parts of evaluating a head-mounted display's overall performance. There are various ways to measure MTP latency. The popular HMD performance tester OptoFidelity® BUDDY uses a novel method, developed by OptoFidelity®, to measure the end-to-end latency. In essence, OptoFidelity® BUDDY measures the outcome of the processing chain above. See also the OptoFidelity® BUDDY video.

Measuring Motion-to-Photon Latency with OptoFidelity® BUDDY

OptoFidelity® BUDDY is an automated measurement system for testing headset performance in AR/VR/MR/XR applications. It is used for R&D, product development, calibration, benchmarking, and device and content quality testing. OptoFidelity® BUDDY is popular in the consumer electronics, gaming, automotive, defense, and healthcare industries. It includes 3DOF robotics, calibrated optical engine modules, sensors, and a software (SW) package.

Figure 2. OptoFidelity® BUDDY

OptoFidelity® BUDDY wears the HMD on its head-shaped fixture. Its camera captures the display content frame by frame, and the SW package performs various computer vision analyses on the captured images.

The system measures the predicted motion-to-photon latency by comparing the content's motion profile with the robot's motion profile while rotating the HMD about one rotational axis at a time. The system also measures the unpredicted MTP latency that occurs with a sudden movement. In that case, the HMD is kept still on all orientation axes for a defined time, and then one axis is rotated at high speed and acceleration.
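As a rough illustration of what comparing two motion profiles can look like, the sketch below searches for the time delay that best aligns a content orientation trace with a robot encoder trace. It is not OptoFidelity's algorithm: the function names, the least-squares criterion, the sampling rates, and the synthetic 18 ms delay are all assumptions made for this example, and the search only covers non-negative delays.

```python
import math


def interpolate(times, values, t):
    """Linearly interpolate the sampled signal (times, values) at time t."""
    if t <= times[0]:
        return values[0]
    if t >= times[-1]:
        return values[-1]
    lo, hi = 0, len(times) - 1
    while hi - lo > 1:  # binary search for the two bracketing samples
        mid = (lo + hi) // 2
        if times[mid] <= t:
            lo = mid
        else:
            hi = mid
    frac = (t - times[lo]) / (times[hi] - times[lo])
    return values[lo] + frac * (values[hi] - values[lo])


def estimate_latency(robot_t, robot_deg, content_t, content_deg,
                     max_latency_s=0.1, step_s=0.0005):
    """Return the delay (s) that best aligns the content with the robot."""
    # Keep only content samples that stay inside the robot record for every
    # candidate delay, so all candidates are scored on the same samples.
    usable = [(t, c) for t, c in zip(content_t, content_deg)
              if robot_t[0] + max_latency_s <= t <= robot_t[-1]]
    best_latency, best_error = 0.0, math.inf
    for k in range(int(round(max_latency_s / step_s)) + 1):
        latency = k * step_s
        # Mean squared difference between content and delayed robot angle.
        error = sum((c - interpolate(robot_t, robot_deg, t - latency)) ** 2
                    for t, c in usable) / len(usable)
        if error < best_error:
            best_latency, best_error = latency, error
    return best_latency


# Synthetic data (hypothetical): a 0.5 Hz, +/-20 degree sinusoidal rotation,
# encoder sampled at 1 kHz, display captured at 90 Hz with an 18 ms delay.
def angle(t):
    return 20.0 * math.sin(2.0 * math.pi * 0.5 * t)


robot_t = [i / 1000.0 for i in range(2001)]
robot_deg = [angle(t) for t in robot_t]
content_t = [i / 90.0 for i in range(181)]
content_deg = [angle(t - 0.018) for t in content_t]

latency_s = estimate_latency(robot_t, robot_deg, content_t, content_deg)
print(f"estimated MTP latency: {latency_s * 1000:.1f} ms")
```

On this synthetic data the search should recover a value close to the simulated 18 ms delay. A headset with motion prediction can also show the content slightly ahead of the robot, so a real analysis would need to allow negative delays as well.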

During the MTP latency measurement, images are captured frame by frame from the HMD's display. Image capture is managed by a novel smart camera system that combines patented automatic display frame rate synchronization with an image sensor, a color sensor, and a microcontroller. During image capture, the color sensor detects the rising edges of the display illumination, and the microcontroller triggers the image exposure and reads out the image. The robot's motion is tracked precisely by an encoder counter device synchronized to the same clock as the camera.
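The idea of detecting rising edges of the display illumination can be sketched in software. The example below is only an illustration on simulated light-sensor samples: the hysteresis thresholds, the 20 kHz sampling rate, and the 90 Hz low-persistence display are assumptions, and it says nothing about how the patented synchronization is actually implemented in the smart camera.

```python
def rising_edges(times, intensity, low, high):
    """Return the times at which intensity reaches `high` after dipping to `low`."""
    edges = []
    armed = False  # becomes True once the signal has been seen dark
    for t, v in zip(times, intensity):
        if v <= low:
            armed = True
        elif armed and v >= high:
            edges.append(t)  # a camera exposure could be triggered here
            armed = False
    return edges


def refresh_rate_hz(edges):
    """Estimate the display refresh rate from the mean spacing of the edges."""
    return (len(edges) - 1) / (edges[-1] - edges[0])


# Synthetic data (hypothetical): a 90 Hz low-persistence display that is lit
# for 2 ms per frame, sampled by the light sensor at 20 kHz for 0.5 s.
period_s = 1.0 / 90.0
times = [i / 20000.0 for i in range(10000)]
intensity = [1.0 if (t - 0.004) % period_s < 0.002 else 0.0 for t in times]

edges = rising_edges(times, intensity, low=0.2, high=0.8)
print(f"{len(edges)} edges, refresh rate ~ {refresh_rate_hz(edges):.2f} Hz")
```

Using two thresholds instead of one keeps sensor noise around a single threshold from producing several edges per frame.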

To measure true end-to-end motion-to-photon latency, OptoFidelity® BUDDY uniquely combines an absolute marker constellation in the AR/VR/MR content with a comparison against the real-world robot pose.

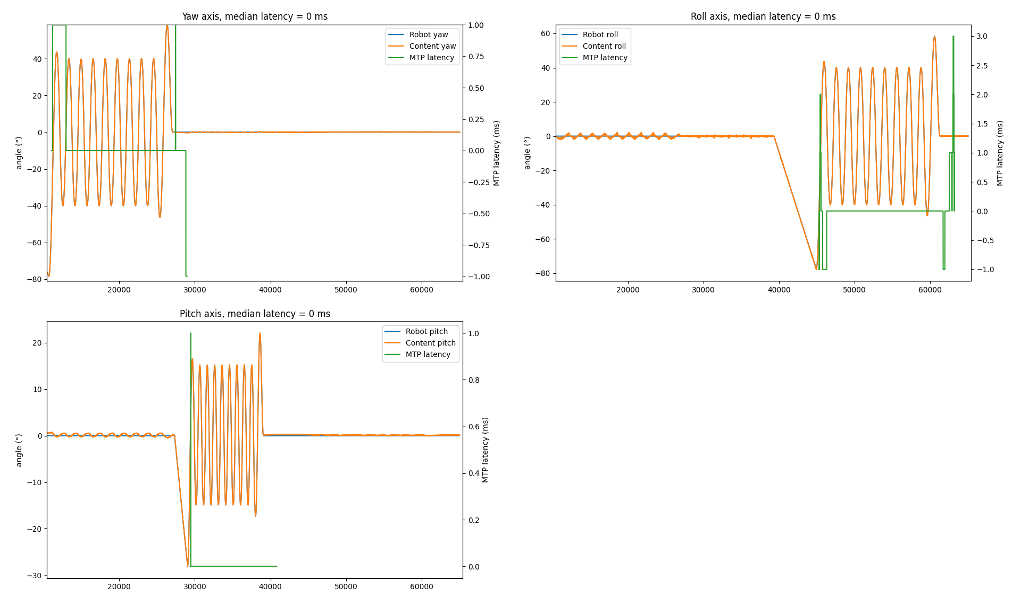

Figure 3. Absolute marker pattern

The content's and the robot's motion profiles are compared by observing the differences between the robot encoder readings, which are sampled at specific intervals (motion), and the content orientation recorded by the camera at display refreshes (photon). For more information, see our earlier blog "Novel HMD testing technology – Optical measurement of VR headset tracking performance". Now let us look at a motion-to-photon latency measurement result for a commercially available head-mounted display.

Figure 4. Motion-To-Photon (MTP) latency measurement results

During the MTP latency measurement, mean absolute jitter and peak jitter in degrees, pose errors in all orientation axes, and initial latency are also measured as described in our earlier blog "Comparing VR headsets’ tracking performance".
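This post does not spell out how the jitter figures are computed, so the following is only one plausible reading, stated as an assumption: treat the pose error as content orientation minus robot orientation, and treat jitter as the deviation of that error from its mean. The function name and the sample values are hypothetical.

```python
def jitter_metrics(content_deg, reference_deg):
    """Summarize pose error and jitter (degrees) for one orientation axis.

    Assumed definitions (not taken from the post): pose error is content minus
    reference, and jitter is the deviation of each error from the mean error.
    """
    errors = [c - r for c, r in zip(content_deg, reference_deg)]
    mean_error = sum(errors) / len(errors)
    deviations = [abs(e - mean_error) for e in errors]
    return {
        "mean_pose_error_deg": mean_error,
        "mean_abs_jitter_deg": sum(deviations) / len(deviations),
        "peak_jitter_deg": max(deviations),
    }


# Hypothetical yaw samples: robot reference vs. orientation seen in the content.
reference = [0.0, 1.0, 2.0, 3.0]
content = [0.1, 1.3, 2.1, 3.3]

metrics = jitter_metrics(content, reference)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

Subtracting the mean error first separates a constant pose offset from the frame-to-frame variation that a user would perceive as shaking.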

Initial latency refers to the motion-to-photon latency at the start of the movement. During continuous movement, movement prediction and other optimizations often reduce the motion-to-photon latency to near zero. When a motion is sudden, there is usually a more significant latency at the start of the movement. This can be measured by moving the robot suddenly and comparing the time offset between the robot's encoder positions and the content positions.
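A minimal sketch of such an onset comparison follows, assuming the initial latency is taken as the time between the robot and the displayed content each leaving their rest orientation by the same small threshold. The threshold, the ramp motion, the sampling rates, and the simulated 30 ms delay are illustrative assumptions, not measured values.

```python
def motion_onset(times, angles_deg, threshold_deg):
    """Return the first time the angle leaves its rest value by the threshold."""
    rest = angles_deg[0]  # the HMD is assumed to be still at the first sample
    for t, a in zip(times, angles_deg):
        if abs(a - rest) > threshold_deg:
            return t
    return None  # no motion detected


def initial_latency(robot_t, robot_deg, content_t, content_deg,
                    threshold_deg=0.5):
    """Return content onset time minus robot onset time, in seconds."""
    robot_onset = motion_onset(robot_t, robot_deg, threshold_deg)
    content_onset = motion_onset(content_t, content_deg, threshold_deg)
    if robot_onset is None or content_onset is None:
        return None
    return content_onset - robot_onset


# Synthetic data (hypothetical): the robot rests for 0.5 s, then rotates at
# 200 deg/s. The encoder is sampled at 1 kHz; the display is captured at
# 90 Hz and shows the robot's orientation 30 ms late.
def ramp(t):
    return max(0.0, 200.0 * (t - 0.5))


robot_t = [i / 1000.0 for i in range(1001)]
robot_deg = [ramp(t) for t in robot_t]
content_t = [i / 90.0 for i in range(91)]
content_deg = [ramp(t - 0.030) for t in content_t]

latency_s = initial_latency(robot_t, robot_deg, content_t, content_deg)
print(f"initial latency: {latency_s * 1000:.1f} ms")
```

Because the content is only observed at display refreshes, an estimate of this kind is quantized to roughly one frame period, which is about 11 ms at 90 Hz.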

OptoFidelity® BUDDY is also used to measure:

- World locking performance

- Tracking accuracy and repeatability

- Jitter (motion and stationary)

- Drifting

- Overshoot and undershoot

- FPS measurement

- Signal to photon

OptoFidelity® designs and manufactures several test automation robots and measurement systems. You can see some of our products in this presentation.

We deliver standard as well as customized solutions. Contact us to discuss and find the right solution for your needs.
